ethlambda: how we got a 3x speedup in signature aggregation

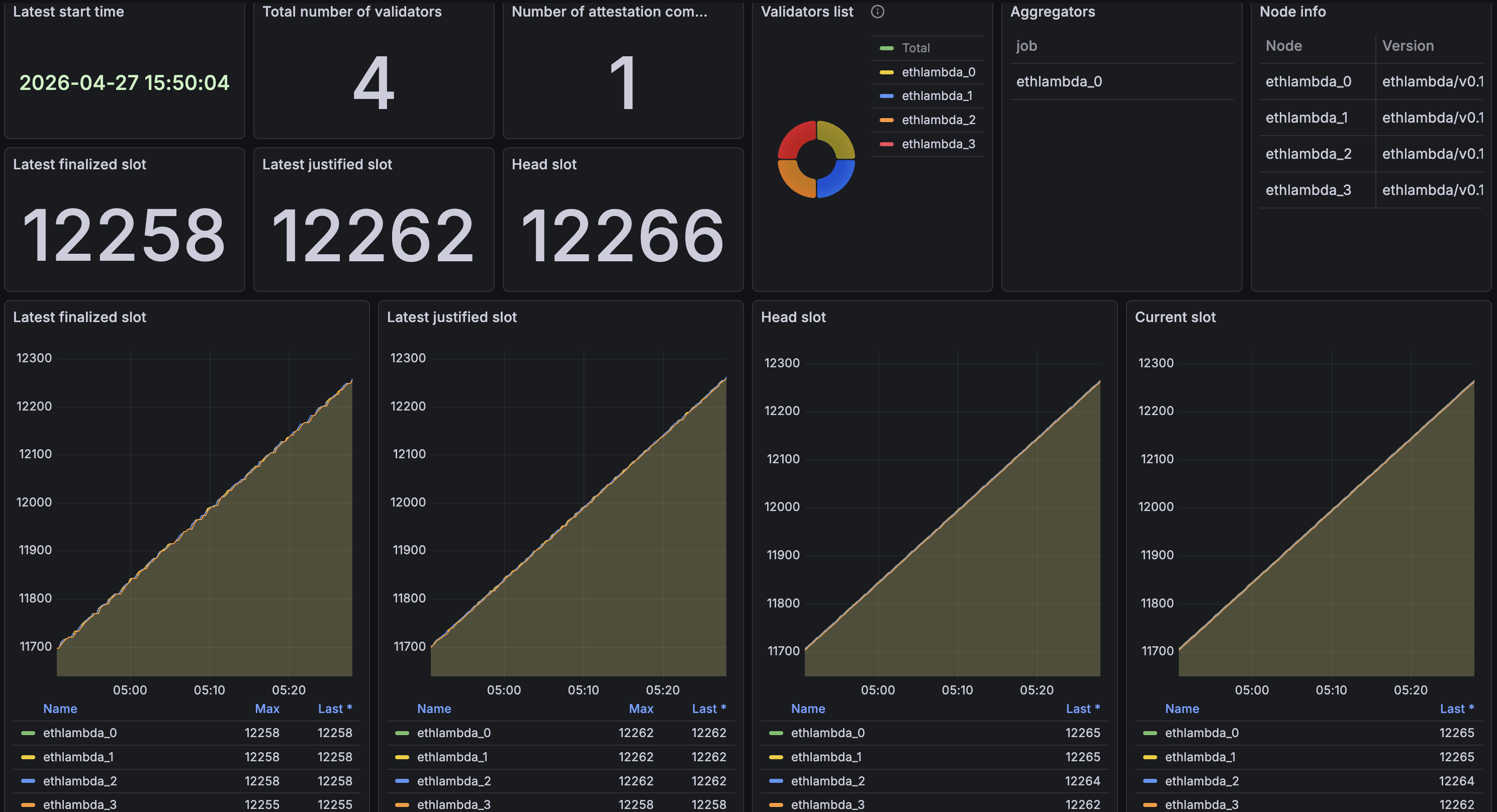

Devnet 4 of the post-quantum leanConsensus testnet introduced recursive signature aggregation through leanMultisig. The change is good for the network (fewer proofs to verify per block), but it pushed enough work onto aggregators that our node started missing slots and slipping on finalization.

Two changes got us back to parity: moving aggregation off the hot path, and tightening the compile target. Aggregation time dropped ~3x, and we stopped missing slots.

Recursive aggregation in devnet 4

leanConsensus uses leanMultisig, a post-quantum signature scheme. Earlier devnets verified one proof per attestation. Devnet 4 added recursive aggregation: aggregators fold many proofs into a single recursive proof, and validators verify one proof per block instead of many.

The tradeoff: cheaper verification, more expensive aggregation.

The problem

When running a devnet of four ethlambda nodes on our server, we saw two symptoms:

- The aggregators missed block proposals on most of their slots.

- Finalization advanced in jumps.

Both pointed to aggregation taking longer than the slot itself. Grafana showed aggregation times pinned at the 4s bucket, which is the entire slot. A histogram only reports which bucket a sample fell into, so we added per-proof timing to the logs to read the actual values.

| Metric | Value |
|---|---|
| Slot duration | 4s |
| Sub-slot interval | 800ms |
| Mean per-proof aggregation time | 2.3s |
| Sub-slot intervals consumed per proof | ~3 of 5 |
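The last row follows directly from the other three. As a quick check of the arithmetic, here is a minimal sketch; the function name is ours and only illustrative:

```rust
use std::time::Duration;

/// How many sub-slot intervals a single proof occupies (fractional).
fn intervals_consumed(per_proof: Duration, interval: Duration) -> f64 {
    per_proof.as_secs_f64() / interval.as_secs_f64()
}

fn main() {
    let slot = Duration::from_secs(4);
    let interval = Duration::from_millis(800);
    let per_proof = Duration::from_millis(2300);

    // 4s / 800ms = 5 intervals per slot.
    let intervals_per_slot = slot.as_millis() / interval.as_millis();
    // 2.3s / 0.8s is roughly 2.9, i.e. about 3 of the 5 intervals.
    let consumed = intervals_consumed(per_proof, interval);
    println!("{consumed:.2} of {intervals_per_slot} intervals");
}
```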

A single proof was eating most of a slot, forcing the aggregator to drop any other validator duties it had. When more than one aggregation ran in the same slot, the aggregator could be practically unresponsive for the whole slot.

Asynchronous aggregation

Aggregation was running on the same task that drives the rest of the node. Async tasks are scheduled cooperatively, so a long CPU-bound computation that never yields blocks everything else on that task. While it crunched a proof, slot ticks and validator duties waited behind it.

We moved aggregation onto a background task (#299) with a 750 ms soft deadline. The node schedules a proof, goes back to its other duties, and publishes the result when it's ready. After the deadline, no new jobs start, but proofs already in flight still complete and get broadcast to the network.
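The soft-deadline behavior can be sketched with plain standard-library threads and a channel. This is an illustration of the idea only, not ethlambda's actual code: `AggregateProof`, `aggregate`, and `spawn_aggregation` are hypothetical names, and the real prover call is replaced by a short sleep.

```rust
use std::sync::mpsc;
use std::thread;
use std::time::{Duration, Instant};

/// Hypothetical stand-in for a finished aggregate proof.
#[derive(Debug, PartialEq)]
struct AggregateProof(u64);

/// Hypothetical stand-in for the expensive prover call.
fn aggregate(job: u64) -> AggregateProof {
    thread::sleep(Duration::from_millis(20));
    AggregateProof(job)
}

/// Runs jobs on a background thread. No new job starts once `soft_deadline`
/// has elapsed, but a job already in flight completes and is still sent back.
fn spawn_aggregation(jobs: Vec<u64>, soft_deadline: Duration) -> mpsc::Receiver<AggregateProof> {
    let (tx, rx) = mpsc::channel();
    thread::spawn(move || {
        let start = Instant::now();
        for job in jobs {
            if start.elapsed() >= soft_deadline {
                break; // deadline passed: do not start new work
            }
            if tx.send(aggregate(job)).is_err() {
                break; // receiver was dropped, nobody wants the result
            }
        }
    });
    rx
}

fn main() {
    let results = spawn_aggregation(vec![1, 2, 3], Duration::from_millis(750));
    // The caller stays free for other duties and polls without blocking.
    loop {
        match results.try_recv() {
            Ok(proof) => println!("publishing {proof:?}"),
            Err(mpsc::TryRecvError::Empty) => thread::sleep(Duration::from_millis(5)),
            Err(mpsc::TryRecvError::Disconnected) => break, // worker finished
        }
    }
}
```

The deadline is checked only between jobs, which is what makes it soft: it bounds when work may start, not when it must end.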

Our aggregators stopped missing proposals, and the finalization curve smoothed out.

In ethlambda, the aggregation worker lives in crates/blockchain/src/aggregation.rs, with session lifecycle (start, deadline, cancel, finalize) managed by the BlockChainServer actor in crates/blockchain/src/lib.rs. A session id fences late results, so a stuck worker from a previous slot can't poison the current one.
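Fencing by session id can be illustrated in a few lines. The types below are hypothetical and much simpler than the real actor; they only show the check that lets a late result from an old session be dropped:

```rust
/// Hypothetical message a worker sends back when it finishes.
struct AggregationResult {
    session_id: u64,
    proof: Vec<u8>,
}

/// Hypothetical owner of the session lifecycle.
struct Sessions {
    current: u64,
}

impl Sessions {
    /// Starts a new session; every previously issued id becomes stale.
    fn start(&mut self) -> u64 {
        self.current += 1;
        self.current
    }

    /// Accepts a result only if it was produced for the current session.
    fn accept(&self, result: AggregationResult) -> Option<Vec<u8>> {
        (result.session_id == self.current).then_some(result.proof)
    }
}

fn main() {
    let mut sessions = Sessions { current: 0 };
    let old = sessions.start();
    let new = sessions.start();

    // A worker still holding the old id reports late: its result is ignored.
    let stale = AggregationResult { session_id: old, proof: vec![0xAA] };
    assert!(sessions.accept(stale).is_none());

    // A result tagged with the current id goes through.
    let fresh = AggregationResult { session_id: new, proof: vec![0xBB] };
    assert_eq!(sessions.accept(fresh), Some(vec![0xBB]));
}
```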

Changed compile target to x86-64-v3

Someone from the leanConsensus community mentioned that compiling leanMultisig for the native architecture gave a ~2x speedup in their benchmarks. We tried it on our Skylake-SP devnet server over a 400-slot run and saw a 2.7x mean reduction in aggregation time.

-C target-cpu=native has a downside: the binary is only guaranteed to run on CPUs that support every feature of the build machine, so it stops being portable across x86-64 hosts. We tried x86-64-v3 instead (#311), a feature level most CPUs from 2013 onward (Haswell and newer) support, broad enough to ship one binary across our hosts. We borrowed this from ethrex, our production-ready execution client, which made the same switch.
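One common way to pin the target for every build is a Cargo config file. The fragment below is a sketch of that approach and an assumption on our part here; the same flag can also be passed through the RUSTFLAGS environment variable, and the exact mechanism used in #311 may differ.

```toml
# .cargo/config.toml (assumed location; applies to all builds in the workspace)
[build]
# Target the x86-64-v3 feature level (AVX2, FMA, BMI1/2) instead of the
# baseline x86-64 default or the build machine's own CPU.
rustflags = ["-C", "target-cpu=x86-64-v3"]
```

A binary built this way will fail with an illegal-instruction error on CPUs older than the v3 level, so the choice only makes sense when every deployment host meets it.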

| Compile target | Mean reduction | Portable across our hosts |
|---|---|---|
| x86-64 (default) | 1x | ✅ |
| native | 2.7x | ❌ |
| x86-64-v3 | 3.1x | ✅ |

x86-64-v3 outperformed native on the same hardware, which surprised us at first. The reason is probably a CPU quirk. Under native, the compiler emits AVX-512 instructions, and AVX-512 triggers core downclocking on this CPU class. x86-64-v3 caps the binary at AVX2, so the core holds its full clock and ends up faster. A CPU without AVX-512 wouldn't show this gap.

Results

With async aggregation and x86-64-v3 in place, our devnet 4 runs match the throughput we had before recursive aggregation, with the verifier-side savings recursion promised on top.

ethlambda is open source and currently running in devnet 4: